
Explainable AI in Finance: Why Observability and Trust Matter

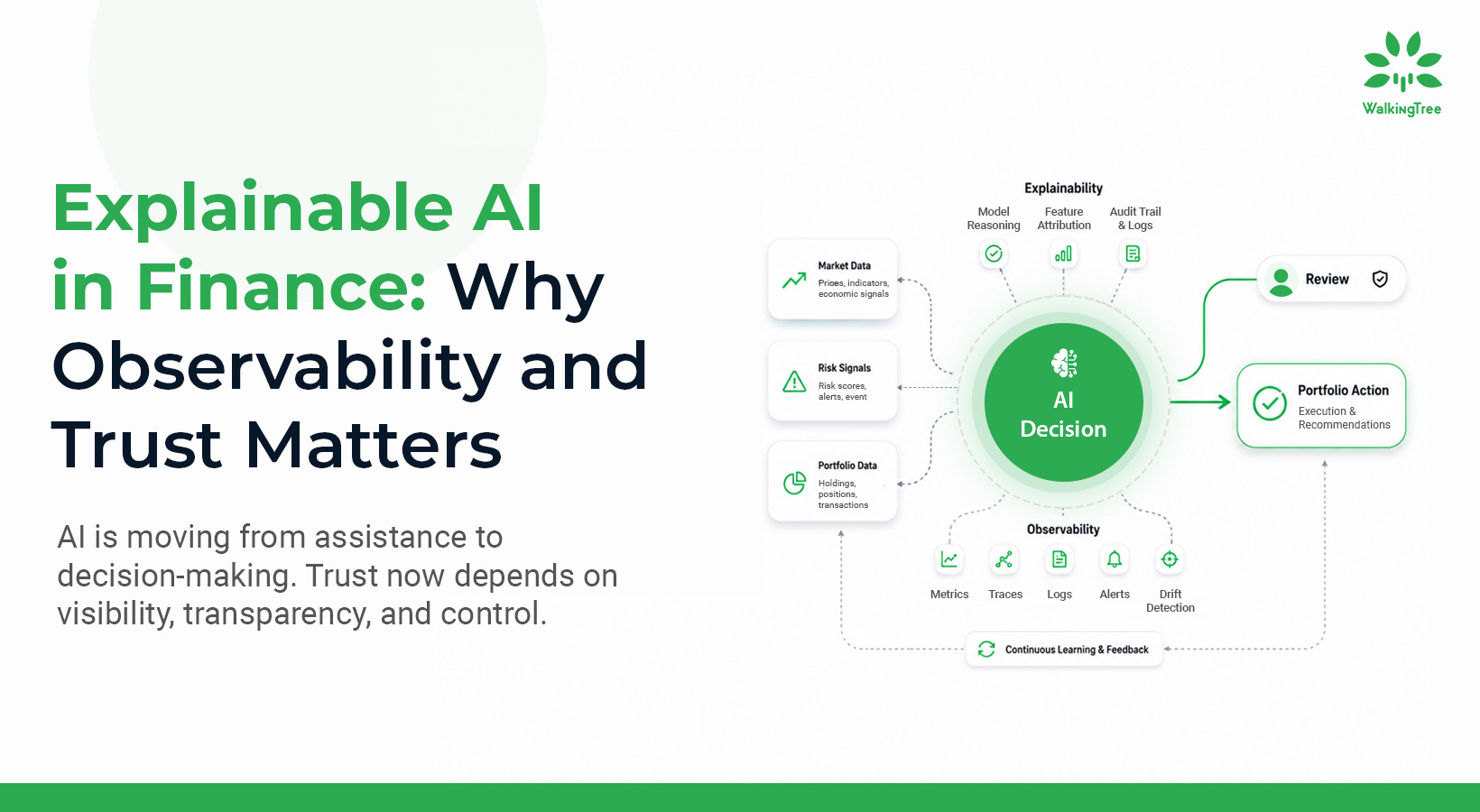

AI is no longer assisting wealth managers. It is starting to make decisions on their behalf.

From portfolio rebalancing to risk evaluation and real-time market response, agentic AI systems are moving from support to control. They can operate across thousands of client portfolios simultaneously, delivering scale, speed, and personalization that human teams simply cannot match.

But production deployment has exposed a hard truth that many firms still underestimate. According to the EY 2025 GenAI in Wealth and Asset Management Survey, 95% of firms have already scaled GenAI to multiple use cases and 78% are actively exploring agentic AI. Yet regulatory and compliance complexities surprised 86% of those same firms. Deployment speed and governance readiness are moving in opposite directions. Performance alone is no longer enough: systems must operate with visibility, transparency, and control.

This is not a tooling gap. It is an architectural gap.

That gap is where the real risk lives.

Autonomy without visibility is risk. Intelligence without explainability is liability.

If AI is going to manage real capital, regulatory exposure, and client trust, observability and explainability are not backend features. They are strategic imperatives for explainable AI in finance.

| The Business Risk of Black-Box Autonomy

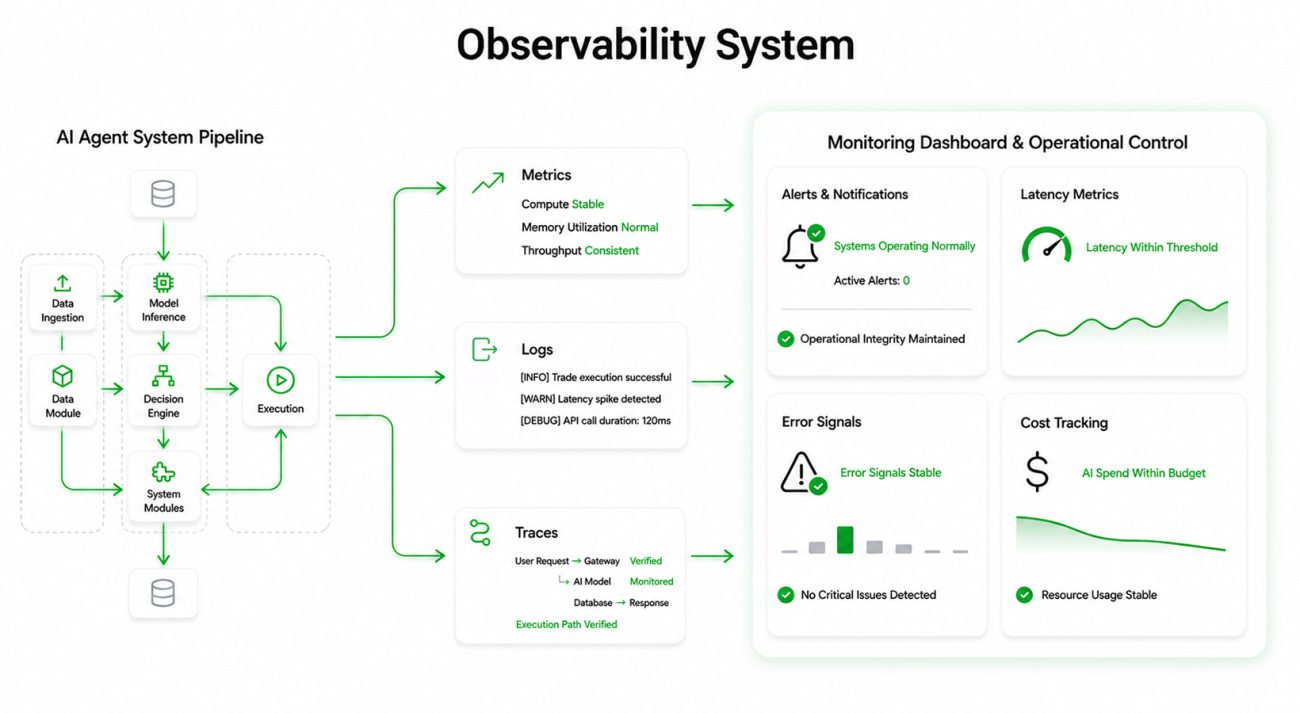

Consider a scenario that is already playing out in early deployments.

An AI-powered portfolio agent automatically reallocates 15% of a client’s equity holdings into fixed income after detecting volatility signals. The move is technically correct. But the client calls their advisor asking why it was done.

If the advisor cannot clearly explain what triggered the decision, what data was used, what risk thresholds were crossed, and whether compliance policies were followed, the organization does not just have a technology gap. It has a trust gap.

That trust gap is why explainable AI in finance is becoming essential for firms deploying autonomous systems at scale. Once trust is lost, it is expensive to rebuild.

| Observability: Operational Confidence at Scale

As AI agents move from pilots to production, leadership needs answers to questions that go far beyond model performance.

Are agents behaving consistently across thousands of portfolios? Are market data integrations reliable? Is model latency affecting execution timing? Are compute and token costs scaling predictably? Are we exposed to systemic drift across strategies?

These are not engineering questions. They are business continuity questions.

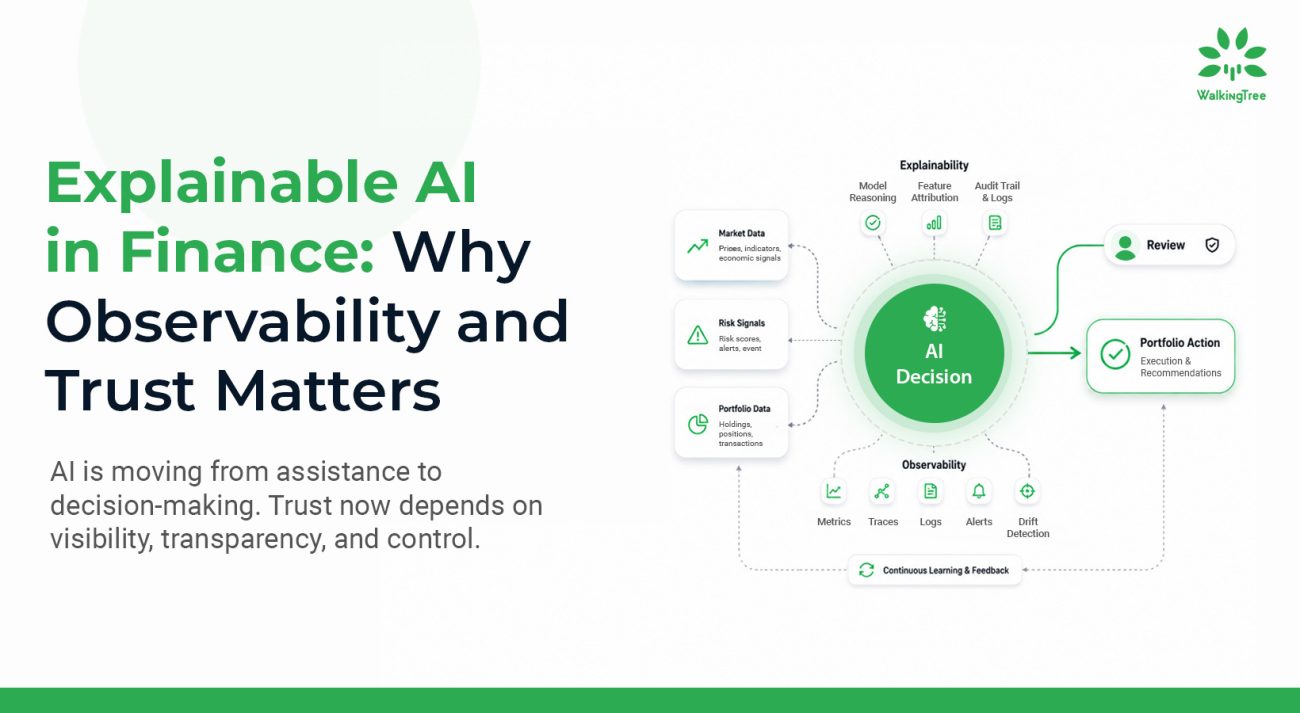

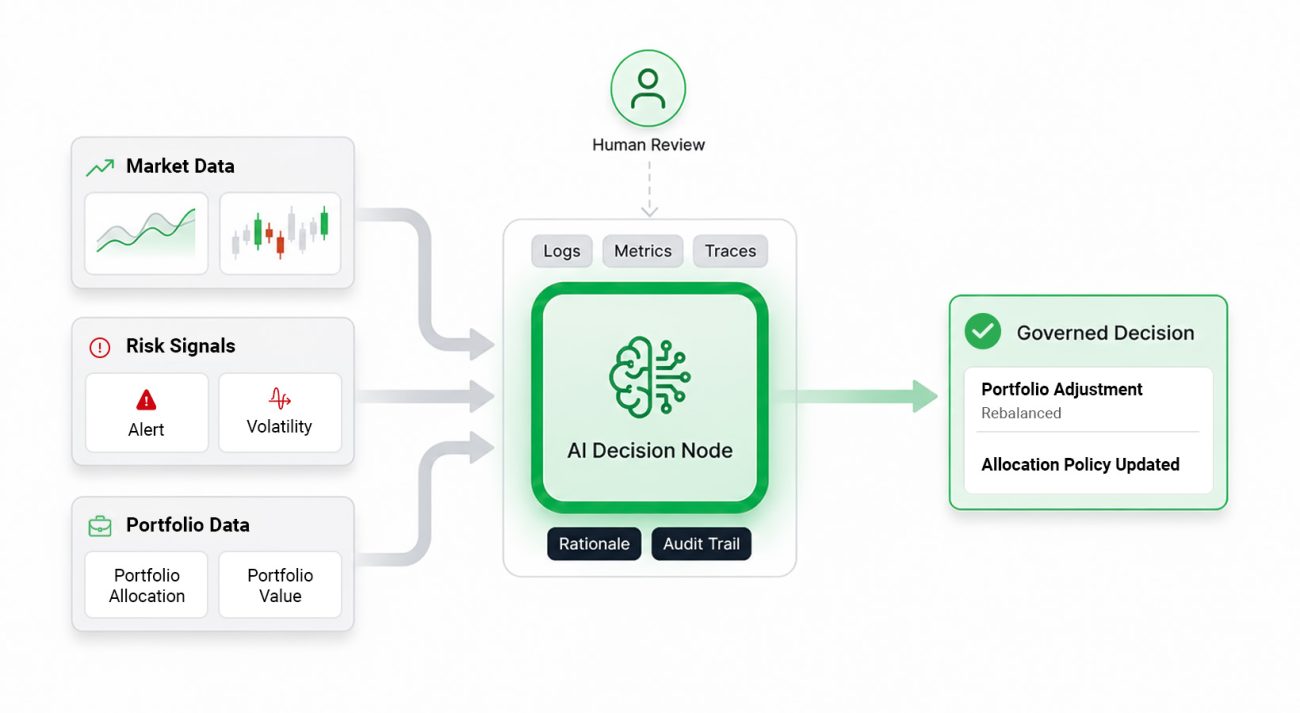

Observability provides the operational nervous system of an autonomous AI platform. Through real-time telemetry, trace logs, error tracking, and policy monitoring, organizations gain early anomaly detection before client impact, cost transparency across AI usage, performance benchmarking across strategies, and continuous regulatory alignment monitoring.

Observability in production AI systems is built on three capabilities: telemetry, error tracking, and real-time alerting. Together, they provide continuous visibility into system behavior, from performance metrics and agent state transitions to integration failures and policy violations. This allows teams to detect issues such as model drift, unreliable data feeds, or execution anomalies before they impact client portfolios.
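The alerting capability described above can be sketched as a rolling error-rate monitor for a single integration, such as a market data feed. This is a minimal illustration only: the `FeedMonitor` class, the window size, and the 20% threshold are assumptions made for the example, not part of any specific platform.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class FeedMonitor:
    """Tracks recent call outcomes for one integration (hypothetical helper)."""

    name: str
    window: int = 50              # number of recent calls considered (assumed)
    max_error_rate: float = 0.2   # alert threshold (assumed)
    outcomes: deque = field(default_factory=deque)

    def record(self, ok: bool) -> None:
        """Store one call outcome and drop the oldest once the window is full."""
        self.outcomes.append(ok)
        if len(self.outcomes) > self.window:
            self.outcomes.popleft()

    def error_rate(self) -> float:
        """Share of failed calls within the current window."""
        if not self.outcomes:
            return 0.0
        return self.outcomes.count(False) / len(self.outcomes)

    def should_alert(self) -> bool:
        """True when the recent error rate exceeds the configured threshold."""
        return self.error_rate() > self.max_error_rate


# Usage: seven successful calls followed by three failures.
monitor = FeedMonitor(name="price_feed", window=10, max_error_rate=0.2)
for ok in [True] * 7 + [False] * 3:
    monitor.record(ok)
print(monitor.name, monitor.error_rate(), monitor.should_alert())
```

In a real deployment the outcomes would come from instrumented integration calls, and the alert would be routed to an operations channel rather than printed.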

In wealth management, this means catching model drift in risk scoring or unexpected behavior in trade execution agents before they lead to client losses or regulatory scrutiny. Observability is what allows leadership to scale autonomous systems with confidence rather than assumption.
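One way to make "catching model drift in risk scoring" concrete is to compare the distribution of recent risk scores against a baseline. The sketch below uses the population stability index (PSI), a common drift statistic chosen here for illustration; the article does not prescribe a method. The function name, bin count, and sample data are all assumptions.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a baseline sample and a recent sample of scores.

    Bins are equal-width over the baseline range; empty bins are floored
    at eps so the logarithm is always defined.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range scores
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Usage with made-up scores: the recent sample is shifted upward.
baseline_scores = [i / 100 for i in range(100)]
recent_scores = [min(s + 0.3, 0.99) for s in baseline_scores]
drift = population_stability_index(baseline_scores, recent_scores)
print(f"PSI = {drift:.3f}")
```

Identical distributions give a PSI of zero, and larger values indicate a bigger shift. The level at which a shift should trigger review is a policy decision for each firm.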

For a deeper look at what platform-level observability means for production AI deployments, the WalkingTree blog on Scaling AI Agents in Production covers this architecture in detail.

| Explainability: The Foundation of Regulatory and Client Trust

Explainability is becoming a foundational requirement for AI in finance. Observability tells you what the system is doing, while explainability tells you why.

In wealth management, decisions are not just technical. They are fiduciary obligations that must be justified, documented, and defensible. Every portfolio adjustment, risk shift, or allocation change must be explainable to regulators, advisors, and clients alike.

Explainability transforms AI decisions into structured, auditable narratives. Why was a portfolio rebalanced? What risk indicators triggered the action? What policies were applied? What historical data influenced the recommendation? These are not optional disclosures. They are the basis of accountable AI in financial services.

When explainability is built into the architecture from the start, decision traces are recorded at each step, rationale is summarized in business-readable language, tool usage and external data interactions are logged, memory states are captured, and audit trails are immutable.

Explainability in agentic systems is enabled through decision tracing, rationale generation, and audit logging. Each action taken by the system is recorded with its underlying logic, supporting data, and policy context. Instead of opaque outputs, stakeholders see clear, structured explanations that connect decisions to risk signals and business rules. This creates a defensible record for every action taken by the system.
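A decision trace of the kind described here could be modeled as a small structured record that carries its own business-readable rationale. The following is a hedged sketch: the `DecisionTrace` fields, signal names, policy identifiers, and values are hypothetical and chosen only to mirror the rebalancing scenario discussed earlier.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionTrace:
    """One recorded agent decision (hypothetical schema)."""

    portfolio_id: str
    action: str
    triggers: dict            # signal name -> (observed value, threshold)
    policies_applied: tuple   # identifiers of policies checked
    data_sources: tuple       # external data used for the decision
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def rationale(self) -> str:
        """Summarize the decision in plain language for advisors and auditors."""
        reasons = "; ".join(
            f"{name} was {observed} against a limit of {limit}"
            for name, (observed, limit) in self.triggers.items()
        )
        policies = ", ".join(self.policies_applied)
        return (
            f"{self.action} for portfolio {self.portfolio_id} because "
            f"{reasons}. Policies applied: {policies}."
        )


# Usage with illustrative values only.
trace = DecisionTrace(
    portfolio_id="P-1001",
    action="Moved 15% of equity holdings into fixed income",
    triggers={"volatility_30d": (0.31, 0.25)},
    policies_applied=("max_equity_exposure", "client_risk_profile"),
    data_sources=("market_price_feed",),
)
print(trace.rationale())
print(json.dumps(asdict(trace), indent=2))  # structured form for audit storage
```

Keeping the structured record and the narrative in the same object means the explanation shown to an advisor is derived from the same data an auditor would inspect.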

The outcome is defensible autonomy, where every action taken by the system is supported by a clear, auditable rationale.

| Human Oversight and Governance in Autonomous Systems

Autonomy does not remove human responsibility. It changes where and how that responsibility is applied. This governance model is central to explainable AI in finance, where accountability cannot be separated from automation.

In production environments, autonomous systems are designed with built-in visibility and control. Decisions are not hidden. They are traceable, reviewable, and auditable at every step.

Human oversight operates through:

- policy enforcement layers that constrain decisions within defined risk boundaries

- approval and escalation workflows for high-impact actions

- monitoring dashboards that provide real-time visibility into system behavior

- exception handling mechanisms that surface anomalies for review

This ensures that autonomy operates within governance, not outside it. The system acts independently, but never without accountability.
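The policy enforcement and escalation mechanisms listed above can be illustrated with a simple gate that every proposed action passes through before execution. This is a sketch under stated assumptions: the `Policy` limits, the `ProposedAction` shape, and the three-way verdict are invented for the example and would differ in any real governance framework.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    AUTO_APPROVE = "auto_approve"
    NEEDS_HUMAN_APPROVAL = "needs_human_approval"
    BLOCKED = "blocked"


@dataclass(frozen=True)
class ProposedAction:
    portfolio_id: str
    shift_pct: float  # share of the portfolio being reallocated, 0 to 100


@dataclass(frozen=True)
class Policy:
    auto_limit_pct: float = 5.0    # at or below this, the agent may act alone (assumed)
    hard_limit_pct: float = 25.0   # above this, the action is rejected (assumed)


def evaluate(action: ProposedAction, policy: Policy) -> Verdict:
    """Decide whether an action runs, escalates to a human, or is blocked."""
    if action.shift_pct > policy.hard_limit_pct:
        return Verdict.BLOCKED
    if action.shift_pct > policy.auto_limit_pct:
        return Verdict.NEEDS_HUMAN_APPROVAL
    return Verdict.AUTO_APPROVE


# Usage: small, medium, and large reallocations under the default policy.
policy = Policy()
for pct in (3.0, 15.0, 40.0):
    verdict = evaluate(ProposedAction("P-1001", pct), policy)
    print(f"{pct}% shift -> {verdict.value}")
```

Raising `auto_limit_pct` over time is one way to express the idea that autonomy widens only as trust is earned.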

In practice, this means autonomy increases only as trust is earned, not assumed.

| Auditability and Memory: The Backbone of Scalable AI Systems

Scaling autonomous AI systems requires more than decision-making capability. It requires a persistent and reliable system of record.

Every action taken by the system must be captured, including:

- decision logs and execution steps

- tool interactions and external data usage

- historical context and memory states

- timestamps and execution timelines

This creates a complete audit trail that supports both internal validation and regulatory review.

Mature systems go further by enabling replay and timeline analysis. Teams can reconstruct decisions step by step, understand how outcomes were reached, and validate behavior against policies and expectations.
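A common way to approximate an audit trail that supports both integrity checks and replay is an append-only log in which each entry includes a hash of the previous one. The sketch below shows that idea with Python's standard library; the `AuditLog` class and event fields are hypothetical. Note that a hash chain makes tampering detectable rather than impossible, so it is one building block, not a complete immutability guarantee.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Append-only, hash-chained event log (illustrative sketch)."""

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(prev_hash: str, event: dict) -> str:
        payload = json.dumps(event, sort_keys=True)
        return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

    def append(self, event: dict) -> str:
        """Add an event, linking it to the hash of the previous entry."""
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        digest = self._digest(prev_hash, event)
        self._entries.append({"event": event, "prev_hash": prev_hash, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS_HASH
        for entry in self._entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != self._digest(prev_hash, entry["event"]):
                return False
            prev_hash = entry["hash"]
        return True

    def replay(self):
        """Yield events in recorded order for step-by-step reconstruction."""
        for index, entry in enumerate(self._entries):
            yield index, entry["event"]


# Usage with illustrative events.
log = AuditLog()
log.append({"step": "signal_detected", "signal": "volatility_30d"})
log.append({"step": "policy_checked", "policy": "max_equity_exposure"})
log.append({"step": "trade_proposed", "shift_pct": 15})
for index, event in log.replay():
    print(index, event["step"])
print("chain intact:", log.verify())
```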

Without this level of traceability, autonomous systems cannot operate in regulated environments.

| From Visibility to Trust: Combining Observability and Explainability

Taken separately, observability mainly serves engineers, and explainability mainly serves the people who must answer for decisions. When combined, the impact compounds across every stakeholder group.

Developers can pinpoint issues quickly because they have rich telemetry tied directly to decisions. Compliance teams can audit autonomous decisions against governance policies without reverse-engineering model outputs. Advisors and clients gain confidence in AI recommendations because they see clear reasoning rather than opaque outputs. Business leadership can scale agentic systems knowing they have both operational insight and accountability built into the production workflow.

This dual approach transforms an AI system from a powerful experiment into a trusted financial service.

Consider a concrete example. A portfolio rebalancing agent detects that market volatility has spiked, triggering a risk threshold breach. Observability systems catch rising error rates in external price feeds and immediately alert the operations team. The explainability pipeline simultaneously documents why the agent shifted exposure out of equities, including the full decision logic and supporting metrics, and surfaces this to a compliance dashboard. Leadership reviews, validates, or overrides the decision before any client is affected.

Without observability, this becomes a silent failure discovered after the fact. Without explainability, it becomes an unaccountable trade with no defensible record. With both in place, it becomes accountable autonomy that earns long-term trust from clients, advisors, and regulators.

Together, these capabilities transform AI systems from opaque decision engines into accountable financial systems that support explainable AI in finance.

The architecture below illustrates how observability and explainability integrate with agent runtime systems to create a fully traceable and accountable AI workflow.

| The Competitive Divide in AI Adoption

The gap is no longer between firms using AI and those that are not. It is between firms building accountable AI systems and those deploying opaque ones. That distinction will define which organizations scale successfully and which stall under regulatory pressure.

Most firms deploying AI in wealth management are focused on model accuracy and workflow automation. Few are investing equally in decision transparency, governance architecture, and runtime observability.

The gap between the two groups is measurable. According to McKinsey’s 2025 State of AI report, 88% of organizations use AI in at least one business function, yet only 39% report enterprise-level financial impact. Adoption has scaled, but outcomes have not. The missing link is not model performance. It is governance, observability, and explainability, which are foundational to explainable AI in finance.

The firms that build observability and explainability into their agentic AI foundations now will hold a structural advantage that compounds over time: faster regulatory approvals, stronger advisor confidence, increased client retention, reduced compliance exposure, and the ability to scale autonomous systems without introducing systemic risk.

WalkingTree’s AlphaTree reflects this shift, with observable orchestration, explainable decision chains, and full audit logging built into the core architecture.

In an industry built on credibility, explainable and observable AI is a brand differentiator. Not a backend implementation detail.

| The Shift from Intelligent AI to Accountable AI

Agentic AI will redefine wealth management. It will scale decision-making, improve responsiveness, and unlock new operational efficiency.

But autonomy alone is not enough.

Systems that cannot be observed, explained, and governed will not earn the trust required to support explainable AI in finance at scale.

The organizations that lead this transition will not be those with the most advanced models, but those with the most accountable systems.

If your organization is building or scaling agentic AI in wealth management, the question is not whether decisions can be automated.

The question is whether they can be explained, governed, and defended.


About Abhilasha Sinha

Abhilasha Sinha leads the Generative AI division at WalkingTree Technologies, leveraging over 20 years of expertise in enterprise solutions, AI/ML, and digital transformation. As a seasoned solutions architect, she specializes in applying AI to drive business innovation and efficiency.
